Alan Mathison Turing was one of the leading scientific minds of his time. He cracked German codes in World War II and is widely regarded as the father of the modern computer. But he wasn’t simply driven by the challenge of solving problems. It was the sudden death of his close friend Christopher Morcom, when Turing was just 17, that profoundly shifted his view of the world. It underpinned many of his greatest discoveries and led him towards his biggest challenge – to build a human brain.

The death in 1930 of Morcom, an intellectual companion for Turing, led him to consider how the human brain worked. He came to the conclusion that it was simply a machine with a series of logic gates – on/off switches – that underpin all our thought processes. As such, he believed there was nothing in it that couldn’t be replicated in a man-made machine. In other words, we could build an artificial brain.

Turing died before he achieved this goal. But now, 100 years after his birth, some scientists are daring to think they might be able to recreate the body’s most complex organ. Their plan isn’t to build a brain out of the 86 billion nerve cells, or neurones, that make up our brains. Instead, they plan to recreate a brain in digital form – using software, silicon and wires.

One of the most ambitious of these initiatives is the Human Brain Project, led by Professor Henry Markram of École Polytechnique Fédérale de Lausanne in Switzerland. His plan is to integrate everything that is known about the brain, from the molecular level up through to its large-scale anatomical structure, in one working model that resides in a supercomputer. It’s a huge challenge requiring big money. In January 2013, Markram’s proposal was selected as one of the European Commission's FET Flagship projects, with a cost estimated at over €1 billion (£0.8 billion).

To build the artificial brain, models of all the processes that go on inside the real thing would need to be encoded in software and brought together so they can interact. The hope is that the resulting ‘unified model’ would provide insights into what makes us tick – exactly how our thoughts and behaviour come about.

“We know a lot about the brain and we have very good knowledge about its components,” says the brain project’s spokesman Richard Walker, “but our systematic knowledge of how they work together is weak.

“We can put information about the behaviour of individual cars into a computer and generate a simulation that will predict traffic jams. In the same way, simulating how the brain’s components behave and interact could give us an overall picture of how they all work together. What the brain actually does in life will emerge from the interactions of these lower-level parts.”
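Walker's traffic analogy can be tried out in a few lines of code. The sketch below is not the project's method: it uses a well-known toy model, the Rule 184 cellular automaton, in which each car on a circular road follows a single local rule (move forward one cell if the cell ahead is empty). The function names, road lengths and car counts are illustrative assumptions.

```python
def step(road):
    """Advance a circular road one tick under Rule 184.

    road is a list of 0s (empty cell) and 1s (car).
    """
    n = len(road)
    new = [0] * n
    for i, occupied in enumerate(road):
        if not occupied:
            continue
        ahead = (i + 1) % n
        if road[ahead]:
            new[i] = 1       # blocked: the car stays where it is
        else:
            new[ahead] = 1   # free: the car moves forward one cell
    return new


def moving_fraction(road):
    """Fraction of cars whose next cell is empty, i.e. cars able to move."""
    n = len(road)
    cars = sum(road)
    moving = sum(1 for i in range(n) if road[i] and not road[(i + 1) % n])
    return moving / cars if cars else 0.0


def simulate(road, ticks):
    """Apply the local rule repeatedly and return the final road."""
    for _ in range(ticks):
        road = step(road)
    return road


# Hypothetical starting conditions: the same 12-cell road, lightly and heavily loaded.
sparse = [1] * 4 + [0] * 8   # 4 cars
dense = [1] * 8 + [0] * 4    # 8 cars

for name, road in (("sparse", sparse), ("dense", dense)):
    final = simulate(road, 20)
    print(name, "".join(map(str, final)), moving_fraction(final))
```

No line of the code mentions a traffic jam, yet on the dense road half the cars end up blocked at any moment while the sparse road flows freely. The jam emerges from the interactions of the individual cars, which is the kind of emergence Walker describes.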

Mind-boggling complexity

It’s not going to be an easy task. The brain’s 86 billion neurones form something like one quadrillion synapses (that’s one million billion), which are being modified continuously, countless times every second. Simulating this complexity would involve processing a huge data set, the size of which approaches that of all the stored information in the world. This would require at least an exaflop of computing power: roughly a billion billion operations per second. Even today’s most powerful supercomputers aren’t up to this gargantuan task.
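To get a feel for these figures, the short calculation below divides them into one another. It takes the neurone and synapse counts from the text, uses the standard definitions of a quadrillion (10 to the power 15) and an exaflop (10 to the power 18 operations per second), and treats "operations per synapse per second" as a rough budget only, not as a description of how any real simulation divides its work.

```python
NEURONES = 86 * 10**9   # 86 billion, from the article
SYNAPSES = 10**15       # one quadrillion
EXAFLOP = 10**18        # operations per second

# Average number of synapses each neurone takes part in.
synapses_per_neurone = SYNAPSES / NEURONES

# Illustrative budget: operations available per synapse each second
# if an exaflop machine were shared evenly across every synapse.
ops_per_synapse_per_second = EXAFLOP // SYNAPSES

print(f"{synapses_per_neurone:,.0f} synapses per neurone")
print(f"{ops_per_synapse_per_second:,} operations per synapse per second")
```

On these numbers each neurone averages more than 11,000 synapses, and even an exaflop machine would have only about a thousand operations a second to spend on each one.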

But Markram has a good track record in the field. In 2008, he and his colleagues used IBM’s Blue Gene supercomputer to simulate a tube-shaped column of neurones in a rat brain, a column containing 10,000 nerve cells with 30 million connections, or synapses, between them. Now, they have simulated 100 such columns.

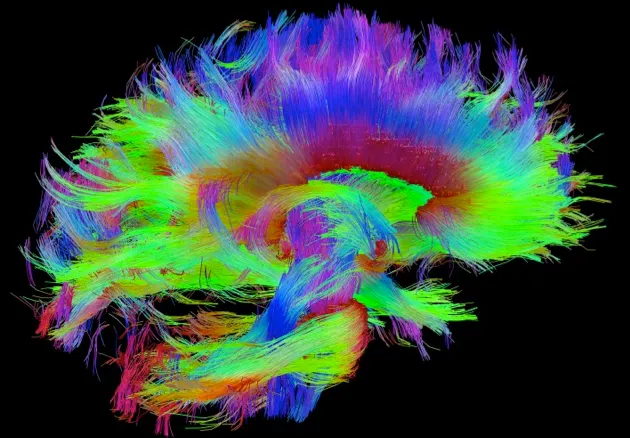

Markram’s team will generate the human brain simulation using some of their own data. In 2012, for example, they published details about the combination of ion channels expressed in several types of neurones in the rat brain. Data from outside sources, such as the Human Connectome Project in which the major pathways of neurones in the brain are being plotted, will be built into the simulation too.

As well as providing new insights into the workings of the brain, Walker believes the resulting simulation could lead to new treatments for diseases. “If we know that schizophrenia is due to some molecular defect, we can simulate that in the model and then do experiments that are otherwise impossible to do in the lab,” he says.

And the benefits would not be limited to medicine. “The human brain is amazing. Every day, it absorbs the same amount of energy as a light bulb. It learns by itself without being programmed and can run for more than 70 years without serious defects,” says Walker. “If we could imitate the brain to build machines with some of these capabilities, we could revolutionise information and communications technology.”

Markram's project brings together scientists from more than 100 international research centres working in neuroscience, robotics, genetics, and mathematics. However, many researchers have criticised the project, arguing that it is ill-conceived and over-ambitious. One of the most outspoken critics is neuroscientist Professor Rodney Douglas of the Institute of Neuroinformatics in Zürich. “A description of the brain is not an explanation of how it works,” he says. “It doesn’t follow that a better understanding of the brain will necessarily emerge from encoding its detailed organisation in a computer. The claim that a theory of brain function would emerge from a simulation is very extravagant.”

A different approach is being taken by Dr Dharmendra Modha who works for IBM in the US and runs the global SyNAPSE project. Instead of building a brain using software, his team plans to build its components using hardware – reproducing the organ’s structures in silicon. And where Markram and his team’s primary aim is to understand how the brain works, Modha is more interested in mimicking the brain to build truly intelligent machines that can program themselves and learn from experience.

Hard-wired brain

Modha’s approach, known as neuromorphic computing, was pioneered by Professor Carver Mead of the California Institute of Technology. Working with PhD student Misha Mahowald, he developed the first neuromorphic device in the late 1980s – a silicon retina in which electrical circuits mimic the function of the eye’s rod and cone cells.

Neuromorphic computing promises to provide supercomputing performance at an affordable price by overcoming the constraints of conventional computers. Developed in the 1940s by early computer scientist John von Neumann and others, conventional computer architecture separates the central processing unit from the memory, so that information has to be shuttled back and forth between the two through another component called a bus. Packets of information are processed one at a time and sent to the memory individually. This creates a bottleneck that slows the computer and uses lots of energy.

Crucially, neuromorphic chips integrate the computational units and the memory components on the same silicon circuit and process multiple pieces of information in parallel, more closely resembling the brain – increasing processing speed and reducing the energy required. “Von Neumann architectures are very good at calculations and repetitive tasks, but the brain is fundamentally different,” says Ton Engbersen, a senior researcher at IBM’s Zurich lab who is involved with the SyNAPSE project. “It’s very good at interpreting what we see and all other kinds of what we call ‘unstructured data’.”

In 2011, Modha’s global team of scientists unveiled the most sophisticated neuromorphic chip yet: a ‘neurosynaptic core’ consisting of 256 neurones and more than 260,000 synapses replicated in silicon. The ‘weight’, or strength, of each synapse can be pre-programmed into the simulation before it is run, enabling the chip to perform navigation and pattern-recognition tasks.
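The idea of pre-programmed synaptic weights can be sketched in software. The toy model below is not IBM's design: it assumes 1,024 input lines feeding the 256 neurones (which gives 262,144 possible synapses, in line with the "more than 260,000" quoted above), uses simple on/off weights, and invents a firing threshold and leak for illustration. Each neurone adds up the input spikes arriving through its programmed synapses and fires when the total crosses the threshold.

```python
N_INPUTS = 1024    # assumed number of input lines (not stated in the article)
N_NEURONES = 256   # from the article
THRESHOLD = 4      # hypothetical firing threshold
LEAK = 1           # hypothetical charge lost by each neurone per tick


def blank_core():
    """Return an unprogrammed core: every synapse weight is 0 (off)."""
    return [[0] * N_NEURONES for _ in range(N_INPUTS)]


def program(weights, neurone, inputs):
    """Pre-program a neurone to listen to the given input lines."""
    for line in inputs:
        weights[line][neurone] = 1


def tick(weights, potentials, active_inputs):
    """One time step: integrate incoming spikes, leak, then fire and reset."""
    active = list(active_inputs)
    fired = []
    for j in range(N_NEURONES):
        drive = sum(weights[line][j] for line in active)
        v = max(0, potentials[j] + drive - LEAK)
        if v >= THRESHOLD:
            fired.append(j)
            v = 0            # reset after a spike
        potentials[j] = v
    return fired


# Weights are set before the run: neurone 0 "recognises" activity on
# input lines 0-7, neurone 1 on lines 8-15.
weights = blank_core()
program(weights, 0, range(0, 8))
program(weights, 1, range(8, 16))

potentials = [0] * N_NEURONES
print(tick(weights, potentials, range(0, 8)))    # full pattern for neurone 0
print(tick(weights, potentials, range(8, 12)))   # half of neurone 1's pattern
print(tick(weights, potentials, range(8, 12)))   # same half again
```

Presenting the whole of a neurone's pattern makes it fire at once, while a partial pattern has to be seen on successive ticks before enough charge accumulates. All of the 'knowledge' sits in the weights, which is why choosing them is the hard part.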

“I believe there’s an algorithm for how the brain learns, although we don’t know that yet,” says Engbersen. “We are trying to build the next generation of computers that can process information and learn in the same way as the brain.” In the long run, the IBM team is planning to develop a human-scale system containing one quadrillion synapses distributed across multiple neurosynaptic core chips.

But while most neuroscientists agree that the mind is an emergent property of the brain, it’s not clear whether something like human intelligence would emerge from one of these artificial brains.

Dr Kwabena Boahen, principal investigator at Stanford University’s Brains in Silicon research group, believes it would. “How else could intelligence emerge? It has to come out of stuff we can build. The physics of how neurones work is very similar to the physics of transistors. As we learn more about the brain, we can replicate its functions.” Turing would have undoubtedly agreed.

Boahen and his colleagues have built a neuromorphic supercomputer that rivals the performance of IBM’s Blue Gene while consuming 100,000 times less energy. They are using it to run a real-time simulation of a million neurones interconnected with something in the order of a billion synapses. The aim is to understand how the brain decides where to focus attention and how we choose what to do – how cognition happens through the actions of neurones.

“The brain is not made out of some alien technology,” says Boahen, “and there’s nothing going on in there that we can’t replicate in silicon. We know a lot about how individual neurones and synapses work. It’s a matter of figuring out how complex phenomena emerge when lots of them are hooked up to each other.”

Melanie Mitchell, Professor of Computer Science at Portland State University in the US, agrees that the brain could eventually be replicated, but argues that our knowledge of how it functions is woefully inadequate. “In principle, we can replicate anything the brain does,” she says. “But in practice it’s going to be very difficult. We don’t yet know enough about the brain to say what its core algorithms are.”

Fathoming the brain

New research is revealing just how little we understand about our most complex organ. After British physiologists Alan Hodgkin and Andrew Huxley discovered in 1952 that nervous impulses are generated by the flow of ions, or charged atoms, we came to view neurones as computational units that function as binary switches, existing in either an ‘on’ or ‘off’ state. In recent years, however, neuroscientists have come to realise that neurones are more complex. Rather than being binary switches, they exist in many different states. And the nervous impulses that travel along them can move in both directions, not just one.

No-one – not even Markram and Modha – believes that building an artificial brain is going to be easy. To a large degree, the human brain is a black box filled with many smaller black boxes: after a century of investigation, the workings of some of these boxes remain a mystery. But while the prospects of completely achieving Turing’s ultimate aim still seem slim, the lessons we can learn as we strive towards it could, like Turing’s own work, be revolutionary.

Moheb Costandi is a molecular and developmental neurobiologist turned science writer
