The year 2010 doesn’t sound like it was that long ago, but technology moves fast. A decade ago Tinder, Uber and Instagram didn’t exist. No one wore wearables, nobody talked to their gadgets at home and the Tesla was just an idea.

Back then, scientists were still looking for the Higgs Boson, Pluto was a mysterious blurry orb just out of sight and genetic editing was still just a theoretical concern, not a practical one.

The next decade looks set to move even faster. So here’s our tour of the new science and tech trends to look out for this decade.

1

Synthetic media will undermine reality

The entertainment world will literally create the next generation of stars.

You know about deepfake technology, where someone’s face is switched into an existing video scene. But deepfakes are just the tip of the iceberg when it comes to synthetic media – a much wider phenomenon of super-realistic, artificially generated photos, text, sound and video that seems destined to shake our notions of what is actually ‘real’ over the next decade.

Take a look at thispersondoesnotexist.com. Hit refresh a few times. None of the faces you see are real. Uncannily realistic, they are entirely synthetic – generated by generative adversarial networks, the same type of artificial intelligence behind many deepfakes.

These false photos show just how far synthetic media has come in the past few years. Elsewhere, China’s Xinhua state news agency has provided an insight into possible uses of synthetic media – computer-generated news anchors. While the results are a little clunky, they suggest a direction in which things may be heading.

While such synthetic media has potential for an explosion in creativity, it also has the potential for harm, by providing purveyors of fake news and state-sponsored misinformation new, highly malleable channels of communication.

2

There will be a revolution in cloud robotics

A global network of machines talking and learning from one another (sound familiar?) could create robo-butlers.

Until now, robots have carried their pretty feeble brains inside them. They’ve received instructions – such as rivet this, or carry that – and done it. Not only that, but they’ve worked in environments such as factories and warehouses specially designed or adapted for them.

Cloud robotics promises something entirely new: robots with super-brains stored in the online cloud. The thinking is that these robots, with their intellectual clout, will be more flexible in the jobs they do and the places they can work, perhaps even speeding up their arrival in our homes.

Google Cloud and Amazon Cloud both have robot brains that are learning and growing inside them. The dream behind cloud robotics is to create robots that can see, hear, comprehend natural language and understand the world around them.

One of the leading players in cloud robotics research is Robo Brain, a project led by researchers at Stanford and Cornell universities in the US. Funded by Google, Microsoft, government institutions and universities, the team are building a robot brain on the Amazon cloud, learning how to integrate different software systems and different sources of data.

Another one to watch is the Everyday Robot Project, by X, the ‘moonshot factory’ at Alphabet, Google’s parent company. The project aims to develop robots intelligent enough to make sense of the places we live and work. They’re making headway too – testing cloud robots in Alphabet offices in Northern California. So far, the tasks are simple, such as sorting the recycling (pretty slowly, says X), but it’s the shape of robots to come.

3

Diseases will be edited out of our DNA

The gene-editing tool CRISPR could finally treat disease at the genetic level.

The birth of the world’s first gene-edited babies caused uproar in 2018. The twin girls whose genomes were tinkered with during IVF procedures had their DNA altered using the gene-editing technology CRISPR, to protect them from HIV. CRISPR uses a bacterial enzyme to target and cut specific DNA sequences.

Chinese researcher He Jiankui, who led the work, was sent to prison for disregarding safety guidelines and failing to obtain informed consent.

But in ethically sound studies, CRISPR is poised to treat life-threatening conditions. Before the controversy, Chinese scientists injected CRISPR-edited immune cells into a patient to help them fight lung cancer.

By 2018, two US trials using similar techniques in different kinds of cancer patients were up and running, with three patients reported to have received their edited immune cells back.

Gene-editing is also being tested as a treatment for the inherited blood disease sickle cell anaemia. An ongoing trial will collect and edit stem cells from patients’ own blood.

4

We will begin to see living machines

Biological robots could start solving our problems.

Synthetic biologists have been redesigning life for decades now, but so far they’ve mostly been messing about with single cells – a kind of souped-up version of genetic modification.

In 2010, Craig Venter and his team created the first synthetic cell, based on a bug that infects goats. Four years later, one of the first products of the synthetic biology era hit the market, when the drug company Sanofi started selling malaria drugs made by re-engineered yeast cells.

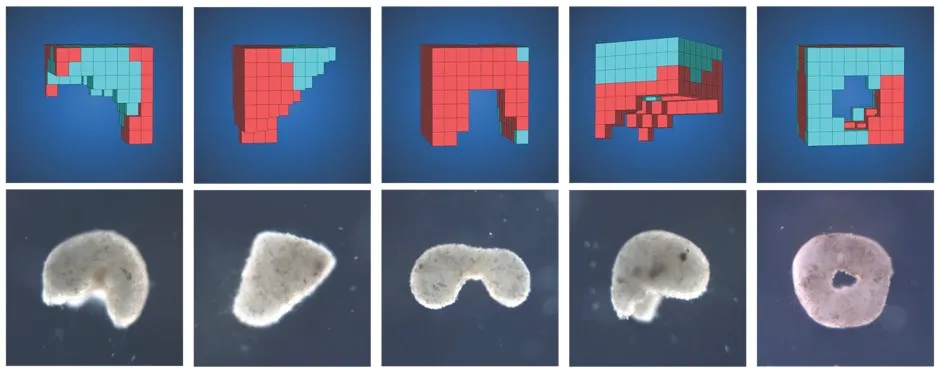

Today, though, biologists are starting to find ways to organise single cells into collectives capable of performing simple tasks. They’re tiny machines, or as biologist Josh Bongard at the University of Vermont refers to them, ‘xenobots’. The idea is to ‘piggyback’ on the hard work of nature, which has been building tiny machines for billions of years.

Currently, Bongard’s team makes its xenobots with ordinary skin and heart cells from frog embryos, producing machines based on designs etched out on a supercomputer. Just by combining these two types of cells, the team designed machines capable of crawling across the bottom of a petri dish, pushing a small pellet around and even cooperating.

“If you build a bunch of these xenobots and sprinkle the petri dish with pellets, in some cases they act like little sheepdogs and push these pellets into neat piles,” Bongard says.

Their computer runs a simple evolutionary algorithm that initially generates random designs and rejects over 99 per cent of them – selecting only those designs capable of performing the required task in a virtual version of a petri dish.
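To give a feel for the generate-and-reject loop described above, here is a minimal Python sketch. It is not the Vermont team’s software: the 16-cell design, the `simulate` scoring function and every parameter value are hypothetical stand-ins chosen only to make the idea runnable.

```python
import random

GRID = 16              # hypothetical: a design is 16 cells, skin (0) or heart (1)
POP_SIZE = 200         # designs evaluated per generation (assumed value)
KEEP_FRACTION = 0.01   # keep the top 1 per cent, i.e. reject over 99 per cent


def random_design(rng):
    """Generate one random layout of skin and heart cells."""
    return [rng.randint(0, 1) for _ in range(GRID)]


def simulate(design):
    """Toy stand-in for the virtual petri dish.

    Purely illustrative scoring: rewards passive skin cells in the first
    half of the design and contracting heart cells in the second half.
    """
    half = GRID // 2
    top, bottom = design[:half], design[half:]
    return sum(1 for c in top if c == 0) + sum(1 for c in bottom if c == 1)


def mutate(design, rng, rate=0.1):
    """Flip each cell type with a small probability."""
    return [1 - c if rng.random() < rate else c for c in design]


def evolve(generations=30, seed=0):
    rng = random.Random(seed)
    population = [random_design(rng) for _ in range(POP_SIZE)]
    n_keep = max(1, int(POP_SIZE * KEEP_FRACTION))
    for _ in range(generations):
        # Rank designs by simulated performance and discard all but the best.
        population.sort(key=simulate, reverse=True)
        survivors = population[:n_keep]
        # Refill the population with mutated copies of the survivors.
        population = survivors + [
            mutate(rng.choice(survivors), rng)
            for _ in range(POP_SIZE - n_keep)
        ]
    return max(population, key=simulate)


best = evolve()
print(best, simulate(best))
```

Because the survivors are carried over unchanged each round, the best score can never get worse from one generation to the next. In the real project the scoring step would be a physics simulation of the cells rather than a one-line rule.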

As Bongard explains, the scientists still have to turn the finished designs into reality, layering and sculpting the cells by hand. This part of the process could eventually be automated, using 3D printing or techniques to manipulate cells using electrical fields.

You couldn’t yet call these xenobots living organisms, though, as they don’t, for example, eat or reproduce. Since they can’t utilise food, they also ‘die’, or at least decompose, and quickly, meaning there’s no obvious hazard to the environment or people.

However, combining this approach with more traditional synthetic biology techniques could lead to the creation of new multicellular organisms capable of performing complex tasks. For example, they could act as biodegradable drug delivery machines, and if made from human cells, they would also be biocompatible, avoiding triggering adverse immune reactions.

But that’s not all. “In future work,” says Bongard, “we’re looking at adding additional cell types, maybe like nervous tissue, so these xenobots would be able to think.”

5

Silicon Valley will try to go carbon negative

The tech world is hoping it can turn back the clock on climate change by removing carbon emissions.

A rapid shift away from using fossil fuels is what’s required if we’re going to keep the average global temperature rise within the 1.5°C window needed to mitigate the worst effects of climate change.

But that’s not all we can do. As well as limiting our carbon emissions, there is scope to actually remove carbon from the atmosphere. That’s what Microsoft announced it would start doing, when the software giant kicked off 2020 by revealing its intention to be carbon negative by 2030. What’s more, Microsoft also said that by 2050, it plans to “remove from the environment all the carbon the company has emitted since it was founded in 1975.”

Achieving that goal will take more than simply switching to renewable energy sources, electrifying its fleet of vehicles and planting new forests. Hence, Microsoft is monitoring the development of negative emissions technologies that include bioenergy with carbon capture and storage (BECCS), and direct air capture (DAC).

BECCS uses trees and crops to capture carbon as they grow. The trees and plants are then burnt to generate electricity but the carbon emissions are captured and stored deep underground.

DAC uses fans to draw air through filters that remove the carbon dioxide, which can then be stored underground or potentially even turned into a type of low-carbon synthetic fuel.

Both methods sound promising but have yet to reach a point where they are practical or affordable on a scale necessary for them to have a significant impact on climate change. Microsoft’s hope, as well as that of everyone else looking to turn the tide of the climate crisis, is that these technologies, and others, will develop further over the years to come to a point that makes them viable.

6

Pests will be driven off without cruelty

Labs investigate gene drives to fend off invasive species like grey squirrels and cane toads.

Another potential use for gene-editing is wiping out pests. Dubbed “gene drives”, self-replicating edits based on CRISPR technology could spread through entire populations. In lab trials, the newly introduced DNA often makes one sex sterile, duplicating itself so that it sits on both copies of an animal’s chromosomes and is passed on to all its offspring.

Some mosquitoes have developed resistance against gene drive mutations, but researchers believe they’ll be able to pull off the technique as long as they target the right genes. For safety, scientists are designing ‘override’ drives capable of reversing the edits.

In a 2018 paper, researchers from Edinburgh’s Roslin Institute, which created the first cloned sheep (“Dolly”), suggested gene drives could deal humanely with the Australian cane toad problem. The toxic toads were introduced from Hawaii in 1935 and have killed almost anything that has tried to eat them ever since. The same scientists propose controlling grey squirrels with gene drives, in order to save the UK’s native reds.

7

We will take mushrooms with us to space

Space missions test growing buildings out of fungus.

If we have to flee Earth to take up residence elsewhere in the galaxy, you know what we need to take with us? Mushrooms. Or rather, fungal spores. Not to feed us on the flight over there, but to grow our houses with.

That’s the thinking behind NASA’s myco-architecture project. The space agency is concocting a plan to grow buildings made out of fungi on Mars. According to astrobiologist Lynn Rothschild, who works on the project, it’s a no-brainer when you consider the cost of launching a full-size building into space, versus some practically weightless life-forms that happen to be natural builders. “We want to take as little as possible with us and be able to use the resources there,” she says.

Many fungi, like mushrooms, grow and spread using mycelia – networks of thread-like tendrils that form sturdy materials capable, with minimal encouragement, of growing to fill any container.

On Earth, fungi-fabricated structures are already used to make packaging for wine bottles and as particle board-like materials, and Rothschild suggests they could even be used for growing refugee shelters. On Mars, the organisms would need a little water to get started, which could come from melted ice, plus a food source.

The researchers envisage them being deployed in large bags that would be inflated on landing to provide a container to fill. These bags would contain the food source in dried form and offer the added benefit of preventing contamination of the atmosphere with alien fungi. Once the structures were fully grown, a heating element would be activated, baking the mycelium network like bread to harden it.

If you’re imagining organic-looking buildings with walls sprouting toadstools and orchids, though, think again. Rothschild’s current materials are more “like wholewheat bread that’s been left out”, although she says they could be brightened up by adding colour pigments, through genetic modifications.

Rothschild already has a myco-made stool in her office, which took her students about two weeks to grow, and the team has plans for full-scale structures. But for future space missions, they’d like to send an advance party of robots to do the work for them.

“When I travel, I want a hotel to go to,” says Rothschild. “I don’t want to arrive at an airport and they say ‘we’re going to build the hotel tonight’ and so I think the ideal situation would be to send precursor missions where these things were erected.”

8

Paralysed patients will walk again

Paralysed patients lucky enough to be enrolled in clinical trials are already walking again thanks to rapidly advancing neurotechnology.

In 2018, Swiss and UK scientists announced they had placed nerve signal-boosting implants into the spines of three men paralysed in road and sporting accidents. All are now able to walk a short distance.

And just last year, in a truly sci-fi-style demonstration, researchers at the Grenoble University Hospital in France used an exoskeleton to give a 28-year-old man back the use of his lower limbs after he fell and broke his neck. The man uses two 64-electrode brain implants to control the robo-suit.

9

Natural language gadgets will get weird

Speech-enabled tech, like Alexa, Siri or Google Voice, will start to shape our own speech.

The fantasy of controlling our devices through speech is becoming a reality, even though they can only handle simple commands or enquiries and their speech patterns sound robotic. The next step is getting them to understand and respond in natural language – the sort of conversational exchanges humans use.

Google seemed to have made progress when it unveiled its Duplex system in 2018. An add-on for its Assistant app, Duplex employs more sophisticated types of AI to understand and use natural language to book restaurant tables and hair appointments, or ask about a business’s opening hours. If the booking couldn’t be made online, Assistant would hand over to Duplex, which would call the restaurant and speak to the staff to book you in.

According to reports, people who spoke to Duplex said they didn’t realise they were talking to a machine. The trouble was, Duplex often ran into complications and needed someone to step in. Despite this setback, Google and other developers are still working on ways to bring natural language to our devices.

10

The human brain will be mapped

The plan to write a set of instructions for the human brain takes shape.

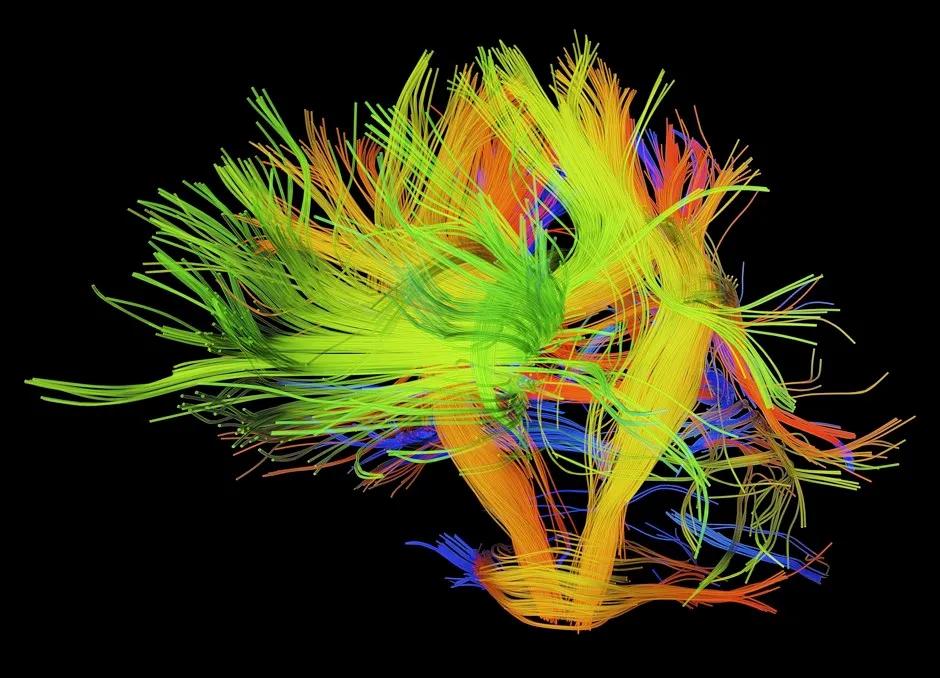

Understanding the human brain is a monumental task, but that hasn’t stopped neuroscience stepping up to meet the challenge. There’s the Human Brain Project, one of the largest ever EU-funded projects, the $5 billion BRAIN Initiative in the US, and the more recently announced China Brain Project.

One of the aims of the US initiative, launched in 2013, is to map all the neurons in the brain as well as their connections. Starting with the mouse brain, the view is to move towards the same goal in humans.

It could “help us crack the code the brain uses to drive behaviour,” says Joshua Gordon, one of the National Institutes of Health (NIH) project directors. But he admits it won’t happen overnight.

As with the Human Genome Project, a simple map won’t provide all the answers, and it may take many years to figure out how the physical features of the brain relate to memories, thoughts, actions and emotions.

For a start, the brain’s ‘code’ can’t be written in a sequence of letters. According to Gordon, the first step is building a ‘parts list’ composed of different types of neurons and then mapping each of those parts in physical space.

Currently, the parts list for mice is well underway, whilst the human equivalent could take another five to 10 years. But understanding how these parts produce behaviour is trickier still.

“Each of those parts also then has a constellation of functions,” Gordon says. Eventually, there should be enough detail in the map to explain how neurons in certain brain circuits function at a molecular level, to produce specific behaviours.

The technologies being developed along the way will have a wider impact on neuroscience too, including research into a broad spectrum of brain disorders from epilepsy to Parkinson’s.

Rapid single-cell sequencing now allows scientists to quickly gather data from hundreds of thousands of individual neurons, highlighting the DNA that is switched on in each one. Meanwhile, imaging tools for studying neurons in exquisite detail and tracking their activities in real-time are advancing.

11

We will go to war with deepfakes

An arms race will pit AIs against each other to discover what’s real and what’s not.

Deepfake videos have exploded online over the past two years. In a deepfake, artificial intelligence (AI) is used to swap one person’s image in a photo or video for another’s.

Deeptrace, a company set up to combat this, says that in just the eight months between April and December 2019, the number of deepfakes online rocketed by 70 per cent to 17,000.

Most deepfakes, about 96 per cent, are pornography. Here, a celebrity’s face replaces the original. In its 2019 report, The State of Deepfakes, Deeptrace says the top four dedicated deepfake porn sites generated 134,364,438 views.

As recently as five years ago, realistic video manipulation required expensive software and a lot of skill, so it was primarily the preserve of film studios. Now freely available AI algorithms that have learned to create highly realistic fakes can do all the technical work. All anyone needs is a laptop with a graphics processing unit (GPU).

The AI behind the fakes has been getting more sophisticated too. “The technology is really much better than last year,” says Associate Professor Luisa Verdoliva, part of the Image Processing Research Group at the University of Naples in Italy. “If you watch YouTube deepfake videos from this year compared to last year, they are much better.”

Now there are huge efforts within universities and business start-ups to combat deepfakes by perfecting AI-based detection systems and turning AI on itself. In September 2019, Facebook, Microsoft, the University of Oxford and several other universities teamed up to launch the Deepfake Detection Challenge with the aim of supercharging research. They pooled together a huge resource of deepfake videos for researchers to pit their detection systems against. Facebook even stumped up $10 million for awards and prizes.

Verdoliva is part of the challenge’s advisory panel and is doing her own detection research. Her approach is to use AI to spot tell-tale signs – imperceptible to the human eye – that images have been meddled with.

Every camera, including smartphones, leaves invisible patterns in the pixels when it processes a photo. Different models leave different patterns. “If a photo is manipulated using deep learning, the image doesn’t share these characteristics,” says Verdoliva. So, when these invisible markings have vanished, chances are it’s a deepfake.
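The idea of checking for a camera’s hidden pattern can be illustrated with a toy Python sketch. This is not Verdoliva’s detector: it works on a single made-up row of pixels, the ‘camera pattern’ is random noise invented for the example, and all names and values are assumptions. It simply strips out the smooth scene content and measures how strongly what is left correlates with the camera’s known pattern.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def residual(pixels):
    """Subtract a 3-pixel moving average, leaving only fine-grained noise."""
    out = []
    last = len(pixels) - 1
    for i, p in enumerate(pixels):
        left = pixels[max(i - 1, 0)]
        right = pixels[min(i + 1, last)]
        out.append(p - (left + p + right) / 3)
    return out


def fingerprint_score(pixels, camera_pattern):
    """How strongly the image's noise matches the camera's known pattern."""
    return pearson(residual(pixels), camera_pattern)


rng = random.Random(42)
N = 2000
# Hypothetical data: a smooth scene plus a fixed per-pixel sensor pattern.
scene = [100 + 50 * math.sin(i / 40) for i in range(N)]
camera_pattern = [rng.gauss(0, 2) for _ in range(N)]

# A genuine photo carries the camera pattern plus a little random noise.
genuine = [s + f + rng.gauss(0, 1) for s, f in zip(scene, camera_pattern)]
# A synthetic image has noise of its own, but not this camera's pattern.
synthetic = [s + rng.gauss(0, 2) for s in scene]

print(fingerprint_score(genuine, camera_pattern))
print(fingerprint_score(synthetic, camera_pattern))
```

In this toy setup the genuine row scores far higher than the synthetic one, whose score hovers near zero. Real detectors work on full two-dimensional images and, in Verdoliva’s case, use AI rather than a fixed correlation test.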

Other researchers are using different detection techniques and while many of them can detect deepfakes generated in a similar way to the ones in their training data, the real challenge is to develop a stealthy detection system that can spot deepfakes created using entirely different techniques.

The extent to which deepfakes will infiltrate our lives in the next few years will depend on how this AI arms race plays out. Right now, the detectors are playing catch-up.

12

Brain-machine interfaces will change the way we work (and walk)

Exoskeletons will help the paralysed walk again and keep factory workers safe.

Part of technology’s promise is that it will enable us to exceed our natural capabilities. One of the areas where that promise is most apparent is brain-machine interfaces (BMIs): devices implanted into your brain that detect and decode neural signals to control computers or machinery by thought.

Perhaps the best example of BMIs’ potential came in October 2019, when a paralysed man named Thibault used one to control an exoskeleton that enabled him to walk.

What’s currently holding BMIs back, however, is the number of electrodes that can be safely implanted to detect brain activity and that, being metal, the electrodes can damage brain tissue and will eventually corrode and stop working.

But last July, tech entrepreneur Elon Musk announced his company, Neuralink, could provide a solution. Not only does the Neuralink BMI claim to use more electrodes, they’re carried on flexible polymer ‘threads’ that are less likely to cause damage or corrode.

But it’s difficult to know for sure how realistic these claims are, as the company has remained tight-lipped about the technology. Furthermore, it’s yet to be trialled in humans.

Even without BMIs, exoskeletons are already being used to augment human capabilities, particularly for people whose capabilities might be limited as a result of illness or injury.

At Hobbs Rehabilitation in Winchester, specialist physiotherapist Louis Martinelli uses an exoskeleton that straps on to a patient’s back, hips, legs and feet to help them stand and step.

“If the patient has had a really severe spinal cord injury, this is the only way to get them up and stepping sufficiently across the room,” he says. “It’s been shown to be really beneficial, particularly for blood pressure management, reducing the risk of vascular diseases, and bladder and bowel function.”

With the exoskeleton, only one to two physiotherapists are needed to assist the patient rather than a team of four or more. But it also allows the patient to achieve a lot more – taking several hundred steps during a session instead of the 10-20 with conventional therapy. There are potential applications elsewhere – upper body exoskeletons are being trialled in a US Ford manufacturing plant to help people carry heavy car parts.

But as useful as lower-body exoskeletons are, they’re unlikely to replace wheelchairs anytime soon. That’s partly because they struggle with uneven surfaces and can’t match walking speed, but also because they’re so much more expensive.

Wheelchair prices start in the region of £150, whereas an exoskeleton can set you back anywhere between £90,000 and £125,000. This is why Martinelli would like to see the technology get a little simpler in the years to come.

“What I’d like to see is the availability of these pieces of equipment increase because they’re very expensive. For individuals to get access to an exoskeleton is really difficult, maybe a simpler version that was half the price would allow more centres or more places to have them.”

13

Machines will track your emotions

Amid ethical concerns, scientists will strive to help AI read feelings.

Emotion AI aims to peer into our innermost feelings – and the tech is already here. It’s being utilised by marketing firms to get extra insight into job candidates.

Computer vision identifies facial expressions and machine learning predicts the underlying emotions. Progress is challenging, though: reading someone’s emotions is really hard.

Professor Aleix Martinez, who was involved in the research, sums it up neatly: “Not everyone who smiles is happy, and not everyone who is happy smiles.”

He is investigating whether emotional AI can measure intent – something central to many criminal cases. “The implications are enormous,” he says.

14

Your AI psychiatrist will see you now

Social and health care systems are under pressure wherever you are in the world. As a consequence, doctors are becoming increasingly interested in how they can use smartphones to diagnose and monitor patients.

Of course a smartphone can’t replace a doctor, but given these devices are with us at almost every moment of the day and can track our every action, it would be remiss not to use this ability for good.

Several trials are already under way. MindLAMP can compare a battery of psychological tests with health tracking apps to keep an eye on your wellbeing and mental acuity. The screenome project wants to establish how the way you use your phone affects your mental health, while an app called Mindstrong says it can diagnose depression just by how you swipe and scroll around your phone.

15

We will set foot on the Moon (and maybe Mars)

Will we see astronauts set foot on the Moon in the next decade? Probably. What about Mars? Definitely not. But if NASA’s plans come off, astronauts will be visiting the Red Planet by the 2030s.

There is no doubting NASA’s aspirations to plant astronaut feet on Mars. In one of its reports, NASA’s Journey to Mars, the agency explains that the mission would represent “the next tangible frontier for expanding the human presence.”

The plan is to use the Moon and a small space station in orbit around it, the Lunar Orbital Platform-Gateway, as stepping stones, allowing the space agency to develop capabilities that will help with the 34-million-mile journey to the Red Planet.

An independent report into NASA’s Martian ambitions lays out a timetable that includes astronauts setting foot on the Moon by 2028, and a mission to orbit Mars less than a decade later, by 2037.

16

Privacy will really matter

After spending much of the last decade handing our data over to the likes of Apple, Facebook and Google via our smartphones, social media and searches, it seems as though people around the world, and the governments that represent them, are wising up to the risks of these corporations knowing so much about us.

The next 10 years look to be no different, only now we can add fingerprints, genetic profiles and face scans to the list of information we hand over. With the number of data breaches – companies failing to keep the data they hold on us secure – climbing every year, it’s only a matter of time before governments step in, or, as in the case of Apple, tech companies start selling us back the idea of personal privacy itself.

17

The internet will be everywhere

Between 5G networks and internet beamed down to us from Elon Musk’s Starlink satellites, mobile internet will get a lot faster and a lot more evenly spread over the next decade.

These new networks will empower entirely new fields of tech, from driverless cars and drone air traffic control to peer-to-peer virtual reality. But this isn’t without its drawbacks.

SpaceX is planning to launch 12,000 satellites over the next few years to create its Starlink constellation, with thousands more being deployed by other companies. More satellites mean more chances of collisions and more space debris as a result. The satellites have also been shown to interfere with astronomical observations and weather forecasting.

18

Underground cities will rise

Earthscrapers could help provide living, office and recreational space for ever-increasing urban populations.

As populations move away from rural areas, urban planners look beneath their feet for answers.

With space in cities so limited, often the only option for those who can afford to expand their property is to go underground. Luxury basements are already a feature beneath many homes in London, but with urban populations set to continue growing, subterranean developments are beginning to appear on a much larger scale.

One idea, still at the concept stage, is the ‘Earthscraper’ proposed for Mexico City. This 65-storey inverted pyramid has been suggested as a way to provide office, retail and residential space without having to demolish the city’s historic buildings or breach its 8-storey height restriction.

Many questions remain as to the feasibility of such a project, however, such as how you provide light, remove waste and protect people from fire or floods. Some of these questions have potentially been answered with the construction of the Intercontinental Shanghai Wonderland hotel in China. This 336-room luxury resort, which opened for business in November 2018, was built into the rock face of an 88m-deep disused quarry.

The island city-state of Singapore is also exploring its underground options. Not only are its Jurong Rock Caverns in the process of being turned into a subterranean storage facility for the nation’s oil reserves, but there are also plans to build an ‘Underground Science City’ for 4,200 scientists to carry out research and development.

In New York, the Lowline Project is turning an abandoned subway station into a park. Expected to open in 2021, it uses a system of above-ground light-collection dishes to funnel enough light into the underground space to grow plants, trees and grass.

19

We will continue to search for extra-terrestrial life

The European Space Agency’s mission to Jupiter and its moons, JUICE, could be our best bet of finding alien life in our Solar System.

If all goes according to plan, in May 2022 the European Space Agency will launch the first large-class mission of its Cosmic Vision Programme. The JUpiter ICy moons Explorer (or JUICE) will slingshot around Earth, Venus and Mars, picking up the speed it needs to propel it out to Jupiter.

JUICE is expected to arrive at the gas giant in 2029, where it will begin possibly the most detailed study of the planet to date.

“There are two goals,” explains Dr Giuseppe Sarri, the JUICE project manager. “One is to study Jupiter as a system. Jupiter is a gas giant with over 70 moons, and for our understanding of the formation of the Solar System, studying [what amounts to] a mini solar system is scientifically useful. We’ll study the atmosphere, magnetosphere and satellite system.

“The second goal is to explore the three icy moons, Callisto, Ganymede and Europa. Because on those moons there could be conditions that can sustain life, either in the past, present or maybe in the future.”

It’s important to note that JUICE won’t be searching for signs of life on these moons, just the appropriate conditions to support it. In other words, to confirm the presence of salty, liquid water below the surface ice.

“It’s a little bit like below Antarctica. In the water below the ice there are very primitive forms of life so conditions could be similar to what we have below our poles,” says Dr Sarri.

“If there’s a chance to have life in our Solar System, Europa and Ganymede are the places. Unfortunately JUICE won’t be able to see the life but it’ll take the first step in looking for it.”

JUICE may also shed light on the mystery of rings. “It looks as if all the giant planets have rings,” Dr Sarri explains. “In the past, astronomers only saw Saturn’s rings but then rings were found at Uranus, Jupiter and Neptune. Understanding the dynamic of rings will help us understand the formation of these planets.”

20

Quantum computers will gain supremacy over supercomputers

Complex data, like weather patterns or climate changes, will be crunched through in a fraction of the time.

Dreams of exploiting the bizarre realm of quantum mechanics to create super-powerful computers have been around since the 1980s.

But in 2019 something happened that made lots of people sit up and take quantum computers seriously. Google’s quantum computer, Sycamore, solved a problem that would take conventional computers much, much longer.

In doing so, Sycamore had achieved ‘quantum supremacy’ for the first time – doing something beyond conventional capabilities.

The task Sycamore completed, verifying that a set of numbers was randomly distributed, took it 200 seconds. Google claims it would have taken IBM’s Summit, the most powerful conventional supercomputer, 10,000 years. IBM begs to differ, saying it would only take Summit 2.5 days.
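To see how far apart those two estimates are, the figures quoted above can be turned into speed-up factors with a few lines of Python. The variable names are illustrative and the length of a year is taken as 365.25 days, which is an assumption of this sketch rather than anything Google or IBM specified.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # assumed average year length

sycamore_s = 200                               # Sycamore's reported run time
google_estimate_s = 10_000 * SECONDS_PER_YEAR  # Google's figure for Summit
ibm_estimate_s = 2.5 * SECONDS_PER_DAY         # IBM's figure for Summit


def speedup(classical_seconds, quantum_seconds=sycamore_s):
    """How many times faster the quantum run is than the classical estimate."""
    return classical_seconds / quantum_seconds


print(f"Google's estimate: {speedup(google_estimate_s):,.0f} times faster")
print(f"IBM's estimate:    {speedup(ibm_estimate_s):,.0f} times faster")
```

On Google’s numbers Sycamore comes out roughly 1.6 billion times faster than Summit. On IBM’s it is about 1,080 times faster, which is still a large gap but a very different kind of claim.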

Regardless, this landmark event has given the quantum computer research community a shot in the arm. A blog post by Sycamore’s developers gives a sense of this. “We see a path clearly now, and we’re eager to move ahead.”

But don’t expect to be using a quantum computer at home. It’s more likely to be running simulations in chemistry and physics, performing complex tasks such as modelling interactions between molecules and in doing so, speeding up the development of new drugs, catalysts and materials.

In the longer term, quantum computers promise rapid advances in everything from weather forecasting to AI.